(Note: I apologize for how long it has taken me to get here. Conferences, classes and life in general conspired to get in the way this week. For the patient, here is the last in this series of posts...)

Part 1: Introduction

Part 2: What Makes A Good Method?

Part 3: Bayesian Analysis (#5)

Part 4: Intelligence Preparation Of The Battlefield/Environment (#4)

Part 5: Social Network Analysis (#3)

Part 6: Multi-Criteria Decision Making Matrices/Multi-Criteria Intelligence Matrices (#2)

Analysis Of Competing Hypotheses (ACH) is probably the best-known intelligence analysis method today. Invented by Richards Heuer over 30 years ago and made famous in his intelligence classic, the Psychology of Intelligence Analysis, ACH is widely taught and conceptually easy even for entry-level analysts.

In addition, it was specifically designed to work in all kinds of situations with any kind and quality of data. What is less clear is whether the method produces unequivocally better estimative results. While ACH is rooted in the scientific method, studies testing its value as a way to improve forecasting have been few and the results have been mixed. In no study have the results been worse than without the method, but some studies have shown that it helps only certain subsets of analysts. (For a good list of these studies, see the Notes list at the end of the ACH article on Wikipedia.)

I am not sure why this is so. My own impression is that a well-done ACH provides a better estimate in less time and with more nuance than virtually any other method available.

We teach ACH here in our freshman classes. I see many, many students struggle not with the basic concepts of ACH but with the details. I see countless examples each year of student projects where they have improperly executed the method (in much the same way a student gets their first attempts at a calculus or chemistry problem incorrect).

In most cases, it is fairly easy to correct the mistakes and the students rarely have a problem seeing what they did wrong or in making the appropriate adjustments. It is less clear to me that, at this early stage in their education, they are able to transfer this knowledge from one type of problem to another, however. We try to reinforce all our methods in upper level classes but the opportunities for reinforcement in the real world are slim (we rarely find, for example, that students are required to use structured methods in their internships).

My own instincts tell me that ACH is a powerful method but that it won't get a fair test, in our own experiments or in many of the others involving it, until such a test is done with analysts who have worked with the method on multiple problems and in multiple circumstances. To be honest, I suspect that this is true of all of the methods I have discussed in this series. Deliberate practice seems to be a key component of expertise in many other fields and I imagine this is true when it comes to intelligence analysis methods as well.

Improving the quality of the final estimate is only one (albeit an important) way that a method should contribute to a quality intelligence product, however. ACH brings much more to the table in my estimation and it does this immediately, in even the earliest projects.

ACH can help the analyst at every stage of the problem, including modelling, collection and collection planning, and preparing a document for dissemination. It is a wholly transparent method and can very easily be used collaboratively. That transparency is crucial in helping instructors or managers identify problems in the analysis of the data, and it is of enormous benefit in understanding and improving the analytic process after the fact. ACH integrates extremely well with various data resources and is very suitable for automation. We find that it is actually faster to use, particularly in a group setting, than most other methods (including intuitive analysis).
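To give a feel for why ACH lends itself to automation, here is a minimal sketch of an ACH-style matrix in Python. It assumes a simplified scoring rule (count only the evidence rated inconsistent with each hypothesis and prefer the hypothesis with the fewest inconsistencies), and the evidence labels, hypotheses and ratings are all hypothetical. It is an illustration, not a full implementation of the method.

```python
# Hypothetical ACH matrix: rows are pieces of evidence, columns are hypotheses.
# "C" = consistent, "I" = inconsistent, "N" = neutral or not applicable.
ach_matrix = {
    "E1": {"H1": "C", "H2": "I", "H3": "C"},
    "E2": {"H1": "I", "H2": "I", "H3": "C"},
    "E3": {"H1": "C", "H2": "N", "H3": "I"},
    "E4": {"H1": "I", "H2": "I", "H3": "C"},
}


def inconsistency_counts(matrix):
    """Count how many pieces of evidence are inconsistent with each hypothesis."""
    counts = {}
    for ratings in matrix.values():
        for hypothesis, rating in ratings.items():
            counts[hypothesis] = counts.get(hypothesis, 0) + (rating == "I")
    return counts


def rank_hypotheses(matrix):
    """Order hypotheses from fewest to most inconsistencies (ties broken by name)."""
    counts = inconsistency_counts(matrix)
    return sorted(counts, key=lambda h: (counts[h], h))


if __name__ == "__main__":
    counts = inconsistency_counts(ach_matrix)
    for hypothesis in rank_hypotheses(ach_matrix):
        print(f"{hypothesis}: {counts[hypothesis]} inconsistent item(s)")
```

Because the whole matrix is just data, anyone reviewing the analysis can see exactly which rating drove which result, which is the transparency point made above.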

The way ahead is a little different here than with the other methods. We think we have a pretty good handle on how to teach ACH. The key, in my estimation, is to create opportunities to reinforce that teaching in and outside the confines of the classroom.

Friday, December 19, 2008

Top 5 Intelligence Analysis Methods: Analysis Of Competing Hypotheses (#1)

Posted by Kristan J. Wheaton at 9:00 AM | 3 comments

Labels: ACH, analysis of competing hypotheses, intelligence, intelligence analysis, intelligence methods, list

Wednesday, December 17, 2008

Jigsaw Demo: A Powerful, New Visual Analytics Tool (YouTube)

Yesterday, I posted my initial reaction to Jigsaw, an extraordinary piece of software that I saw demoed during my visit to GA Tech for the Workshop on Visual Analytics Education sponsored by NVAC. Today, John Stasko and his team uploaded a must-see video of the software in action.

I understand the video is a little old, so some of the functions I saw are not in it. The good thing about John and his band of software wizards, though, is that they are constantly improving the product.

Posted by Kristan J. Wheaton at 3:12 PM | 0 comments

Labels: Jigsaw, Resource, software, visual analysis

Tuesday, December 16, 2008

Amazing Data Visualization Tool From The Geniuses At GA Tech (Jigsaw)

(Note: I am at a visual analysis conference sponsored by the National Visualization And Analytics Center so the last of the Top 5 Methods list will have to wait a day or two. In the meantime, I have found something else that is pretty cool...)

John Stasko and the computer scientists at the Information Interfaces Lab at Georgia Tech may not have found the Holy Grail of visual analysis but they have come pretty darn close with their Jigsaw product.

This extraordinary visualization tool automatically extracts entities (names, places, dates, etc.) from plain text documents. Then, it automatically creates a visualization of the relationships between those entities and the documents containing them. The screenshots below do not do it justice (I hope to have a video of the product in action within a couple of days, though).
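To make the idea of linking entities and documents concrete, here is a minimal Python sketch. It is not Jigsaw's code or algorithm. It assumes the entities have already been extracted (the document IDs and entity names below are hypothetical) and simply builds the two structures a visualization like this would need: an index from each entity to the documents that mention it, and a count of how often two entities appear in the same document.

```python
from collections import Counter, defaultdict
from itertools import combinations

# Hypothetical output of an entity extractor: document ID -> entities found in it.
documents = {
    "doc1": {"Alice", "Bob", "Paris"},
    "doc2": {"Alice", "Paris"},
    "doc3": {"Bob", "London"},
}


def entity_index(docs):
    """Map each entity to the sorted list of documents that mention it."""
    index = defaultdict(list)
    for doc_id in sorted(docs):
        for entity in sorted(docs[doc_id]):
            index[entity].append(doc_id)
    return dict(index)


def co_occurrences(docs):
    """Count how many documents each pair of entities shares."""
    pairs = Counter()
    for entities in docs.values():
        for pair in combinations(sorted(entities), 2):
            pairs[pair] += 1
    return pairs


if __name__ == "__main__":
    print(entity_index(documents))
    for (a, b), count in co_occurrences(documents).most_common():
        print(f"{a} <-> {b}: {count} shared document(s)")
```

The pair counts are the raw material for a link chart: each pair is an edge and the count is its weight.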

The program is fully customizable so you can add or delete data, designate entities or create relationships to modify what the automatic entity extractors come up with.

The real power of the tool comes into play after the data is in the program. You can play with it in a variety of powerful and interesting ways, all accessible through a drop-dead easy user interface.

The software is continuously improving. On the horizon is the ability to use web input, and there is a long analyst-generated to-do list that the grad students at GA Tech are cranking through one item at a time.

The software runs on a desktop and was developed with a DHS grant, so government and academics should reach out to John for a test copy. GA Tech is also home to the Visual Analytics Digital Library, which is well worth checking out.

Posted by Kristan J. Wheaton at 9:08 AM | 0 comments

Labels: Jigsaw, Resource, software, visual analysis

Friday, December 12, 2008

Top 5 Intelligence Analysis Methods: Multi-Criteria Decision Making Matrices/Multi-Criteria Intelligence Matrices (#2)

Part 1 -- Introduction

Part 2 -- What Makes A Good Method?

Part 3 -- Bayesian Analysis (#5)

Part 4 -- Intelligence Preparation Of The Battlefield/Environment (#4)

Part 5 -- Social Network Analysis (#3)

Multi-Criteria Decision Making (MCDM) is a well-known and widely studied decision support method within the business and military communities. Some of the most popular variants of this method include the analytic hierarchy process, multi-attribute utility analysis and, in the US Army, at least, the staff study (see Annex D). There is even an International Society on Multiple Criteria Decision Making.

At its most basic level, MCDM is used to evaluate a variety of courses of action against a set of established criteria (see the image below for what a simple matrix might look like). One can imagine, for example, considering three different types of car and evaluating them for criteria such as speed, cost and fuel efficiency. MCDM would suggest that the car that has the highest total rating across those three categories would be the best car to buy. In fact, Consumer Reports uses exactly this type of method in its famous "circle charts" of everything from cars to hair care products.
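The car example can be sketched in a few lines of Python. The ratings below are hypothetical (a 1 to 5 scale where higher is better, so a cheaper car gets a higher "cost" rating), and the scoring rule is the simplest one possible: add up the ratings and pick the highest total.

```python
# Hypothetical ratings on a 1-5 scale (higher is better); not real data.
ratings = {
    "Car A": {"speed": 4, "cost": 2, "fuel_efficiency": 3},
    "Car B": {"speed": 3, "cost": 4, "fuel_efficiency": 4},
    "Car C": {"speed": 5, "cost": 1, "fuel_efficiency": 2},
}


def total_scores(matrix):
    """Sum each option's ratings across all criteria."""
    return {option: sum(scores.values()) for option, scores in matrix.items()}


def best_option(matrix):
    """Return the option with the highest total rating."""
    totals = total_scores(matrix)
    return max(totals, key=totals.get)


if __name__ == "__main__":
    totals = total_scores(ratings)
    for car, total in sorted(totals.items(), key=lambda kv: kv[1], reverse=True):
        print(f"{car}: {total}")
    print("Best:", best_option(ratings))
```

With these made-up numbers Car B comes out on top with a total of 11, even though it is not the fastest car in the matrix.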

MCDM is flexible and works with a wide variety of data but there are numerous devils in the details of its implementation. The simple example above gets increasingly complicated when we start to examine the many other criteria that someone might use to evaluate a car. Likewise, there are problems with rating each of these criteria (Is a car that gets 27 miles to the gallon really worse than a car that gets 27.1 miles to the gallon?). Even worse is when the analyst starts to think about abstract evaluation criteria such as which of the three cars is "coolest"? You start thinking like this and you begin to understand why they have an international society dedicated to this method...
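One way to handle the 27 versus 27.1 miles per gallon problem is to treat differences below some tolerance as ties. The sketch below is one hypothetical approach rather than a standard MCDM rule: each option's rating is the number of other options it beats by more than the tolerance. The mileage figures and the 1.0 mpg tolerance are assumptions chosen for illustration.

```python
# Hypothetical fuel economy figures in miles per gallon.
mpg = {"Car A": 27.0, "Car B": 27.1, "Car C": 35.0}


def rate_with_tolerance(values, tolerance):
    """Rate each option by how many others it beats by more than `tolerance`."""
    return {
        option: sum(1 for other in values.values() if value - other > tolerance)
        for option, value in values.items()
    }


if __name__ == "__main__":
    # Car A and Car B differ by only 0.1 mpg, so they receive the same rating.
    print(rate_with_tolerance(mpg, tolerance=1.0))
```

Choosing the tolerance is itself a judgment call, which is exactly the kind of devil in the details the paragraph above describes.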

MCDM is, at its heart, an operational methodology, not an intelligence method, however. That said, we have had very good luck “translating” it into an intelligence analysis method (i.e. MCIM) in our strategic intelligence projects. These projects have covered the gamut from large-scale national security studies to small-scale business studies. The matrices can be simple (I actually used such a matrix to evaluate the 5 methods mentioned in this series) or enormously complex (The MCIM matrix on the likely impact of chronic and infectious disease on US national security interests clocks in at 15 feet long when printed).

The key difference between the operational variant and the intelligence variant is perspective. In the operational variant, the analyst is trying to figure out his or her organization's best course of action. In the intelligence variant, the analyst puts himself or herself in the shoes of the adversary and attempts to envision how the other side might see both the courses of action available and the criteria with which the adversary will evaluate them. The intelligence variant has not, to the best of my knowledge, been validated but we have a grad student working on it.
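The shift in perspective can be illustrated with a weighted matrix. In this hypothetical Python sketch, the courses of action, criteria, ratings and weights are all invented for illustration. The same ratings are scored twice: once with equal weights and once with weights the analyst estimates the adversary would apply. It is a sketch of the idea, not a validated MCIM procedure.

```python
# Hypothetical ratings of an adversary's courses of action (1-5, higher is better).
ratings = {
    "negotiate": {"low_cost": 4, "low_risk": 4, "prestige": 1},
    "escalate": {"low_cost": 1, "low_risk": 2, "prestige": 5},
    "wait": {"low_cost": 5, "low_risk": 5, "prestige": 2},
}

# Assumed weights: how much the analyst believes the adversary values each criterion.
adversary_weights = {"low_cost": 1, "low_risk": 2, "prestige": 4}
equal_weights = {"low_cost": 1, "low_risk": 1, "prestige": 1}


def weighted_scores(matrix, weights):
    """Multiply each rating by its criterion weight and sum per course of action."""
    return {
        option: sum(weights[criterion] * score for criterion, score in scores.items())
        for option, scores in matrix.items()
    }


def most_likely(matrix, weights):
    """Return the course of action with the highest weighted score."""
    scores = weighted_scores(matrix, weights)
    return max(scores, key=scores.get)


if __name__ == "__main__":
    print("Equal weights:", most_likely(ratings, equal_weights))
    print("Adversary's weights:", most_likely(ratings, adversary_weights))
```

With these invented numbers, equal weights favor "wait" while the adversary's assumed priorities favor "escalate", which is the point of scoring from the other side's perspective.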

The research agenda for this method (as with many of the other methods discussed so far) is straightforward. First, it has to be validated as an intelligence-specific methodology. The anecdotal evidence and the evidence in the operational literature are good but further testing needs to be done. Second, analysts need to figure out which variants of MCIM work best in which types of intelligence situations. Finally, we need to get the method out of the schoolhouse and into the field.

Next Week: #1!

Posted by Kristan J. Wheaton at 10:59 AM | 1 comment

Labels: intelligence, intelligence analysis, intelligence methods, list, MCDM, MCIM

Thursday, December 11, 2008

Top 5 Intelligence Analysis Methods: Social Network Analysis (#3)

Part 1 -- Introduction

Part 2 -- What Makes A Good Method?

Part 3 -- Bayesian Analysis (#5)

Part 4 -- Intelligence Preparation Of The Battlefield/Environment (#4)

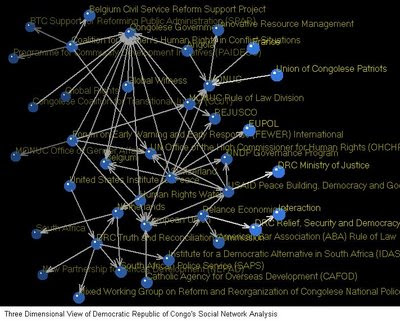

Social Network Analysis (SNA) is fundamentally about entities and the relationships between them. As a result, this method has a number of variations within the intelligence community ranging from techniques such as association matrices through link analysis charts right up to the validated mathematical models. It is most commonly used as a way to picture a network, however, and is rarely used in the more formal way envisioned by the sociologists who created the method. In other words, while SNA is a very powerful method, intelligence professionals rarely take advantage of its full potential.
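The step from an association matrix to a simple formal measure can be sketched in Python. The network below is hypothetical, and degree centrality (the share of other entities each entity is directly linked to) is only one of many measures used in formal SNA. It is used here because it can be read straight off the matrix.

```python
# Hypothetical links between five entities.
links = [("A", "B"), ("A", "C"), ("A", "D"), ("B", "C"), ("D", "E")]


def association_matrix(links):
    """Build a symmetric 0/1 matrix recording which entities are linked."""
    nodes = sorted({node for link in links for node in link})
    matrix = {a: {b: 0 for b in nodes} for a in nodes}
    for a, b in links:
        matrix[a][b] = 1
        matrix[b][a] = 1
    return matrix


def degree_centrality(matrix):
    """Fraction of the other entities each entity is directly linked to."""
    n = len(matrix)
    return {node: sum(row.values()) / (n - 1) for node, row in matrix.items()}


if __name__ == "__main__":
    matrix = association_matrix(links)
    for node, score in sorted(degree_centrality(matrix).items(), key=lambda kv: -kv[1]):
        print(f"{node}: {score:.2f}")
```

A link chart of the same five entities would show the picture; the centrality scores put a number on which entity is best connected.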

Because it is primarily a visual method, most analysts (and the decisionmakers they support) immediately grasp the value of the method. Likewise, some variation of SNA will likely work with any data set where the entities and the relationships among those entities are important (in other words, almost every problem). Parsing all the relevant attributes can be difficult, however, and there are few automated solutions that work well with unstructured data sets. Likewise, at the higher levels of analysis, where the analyst is trying to do more than merely visualize a network, a good bit of special knowledge is required to understand the results.

Talking about SNA in the abstract doesn't make a lot of sense, though. It is much easier to grasp its value as an intel method by looking at some examples. i2's Analyst's Notebook is widely available and examining their case studies is helpful in seeing what that tool can do. Another widely used tool, particularly for more formal analyses, is UCINET. For example, some of our students used this tool last year to examine the "social network" of government and non-governmental organizations engaged in security sector reform in sub-Saharan Africa (the image above comes from their study). Recently, my students and I have been playing around with a new tool from Carnegie Mellon University called ORA. It is very easy to use and very powerful.

Of the 5 methods I intend to discuss, SNA is the one with the widest visibility in all three major intelligence communities (national security, business and law enforcement). As such, there is already a good bit of research activity into how to better use this method in the intelligence analysis process. The big challenge, as I see it, is to design educational programs and tools that help analysts move away from the "pretty picture" possibilities presented by this method and toward the more rigorous results generated by the more formal application of the method.

Tomorrow: #2...

Posted by Kristan J. Wheaton at 11:37 AM | 5 comments

Labels: intelligence, intelligence analysis, intelligence methods, list, SNA, social networks