A few months ago, I wrote an article on the Top 5 Things Only Spies Used To Do (But Everyone Does Now). In that article I stated that one of those things (the #2 thing, in fact) was to "run an agent network."

I equated our now everyday activity of finding and following various people on LinkedIn or Twitter to the more traditional case officer activities of spotting, vetting, recruiting and tasking agents.

While I meant that article to be a bit lighthearted, over the last several months I have been exploring this idea with some seriousness in a class I am teaching with my colleague, Cathy Pedler, and a group of very bright grad students.

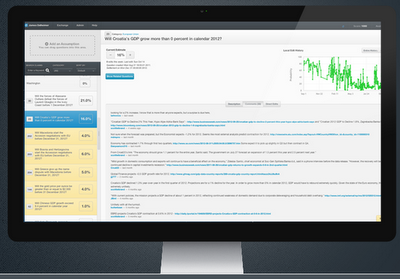

The picture above gives you an inkling of the progress we have made.

In this class (called Collaborative Intelligence - "How to work in a group while learning how groups work"), we have focused our energies on critical and strategic minerals. I have already written about this course (if you want more details go here), but suffice it to say that, recently, we decided to use our new-found skills in social network analysis to see if we could solve a traditional HUMINT problem: "Who should we recruit next?"

Every case officer knows that an agent's value is measured not only in terms of what they know but also in terms of who they know. Low-level agents with an extensive network of contacts within a targeted area of interest are obviously valuable, perhaps even more valuable than the recluse with deep subject matter expertise.

Complicating the case officer's task, however, is the jack-of-all-trades nature of the traditional HUMINT collector. Today, the collector needs to tap into his or her agent network to get economic information; tomorrow, political insights; the next day the need is for information to support some military or technological analysis.

Only an expert case officer with deep contacts can hope to respond to such a wide variety of requests for information. In today's fast-moving, crisis-of-the-day world, the question becomes "Where can I find good sources of information ... on this particular topic ... quickly?"

Twitter to the rescue!

You see, the image I referred to earlier began as the 11 lists of Twitter users the 11 students in my class were currently following as they studied critical and strategic minerals. The students had found these Twitter users the old-fashioned way - they bumped into them. That is, they found them on blogs or in news articles that talked about strategic mineral issues and they followed them on Twitter in order to stay current on their postings. Since each of the students has a slightly different portfolio (the students are broken into three teams: national security, business, and law enforcement; within those teams, each student has an area of specific interest), their lists have some common sources but many different ones as well.

The natural next question is, "Who are my sources of information following?" Using NodeXL to collect the data and ORA to merge, manage and visualize it, the students rapidly discovered who their "agents" were following. Furthermore, we were able to discover new people to follow -- Twitter users that many people on our initial lists were following (implying that they were potentially very good sources of information) but that the students had not yet run across in their research.
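The discovery step described above can be sketched in a few lines of Python. This is only an illustration of the idea, not what NodeXL or ORA do internally: the function name, the threshold parameter, and the account names are all hypothetical, and the input is assumed to be a simple mapping from each account on an initial list to the set of accounts it follows.

```python
from collections import Counter


def suggest_new_sources(following, min_followers_in_seed=2):
    """Rank accounts that are followed by several seed accounts.

    `following` maps each seed account (someone already on a student's
    list) to the set of accounts that seed follows. Accounts already in
    the seed list are skipped, since the students know about them.
    """
    seeds = set(following)
    counts = Counter()
    for seed, followed in following.items():
        for account in set(followed):
            if account not in seeds:
                counts[account] += 1
    # Keep only accounts followed by enough seeds, most-followed first.
    ranked = [(a, n) for a, n in counts.items() if n >= min_followers_in_seed]
    ranked.sort(key=lambda pair: (-pair[1], pair[0]))
    return ranked


# Hypothetical data: three seed accounts and whom they follow.
following = {
    "seed_a": {"seed_b", "minerals_news", "rare_earth_watch"},
    "seed_b": {"minerals_news", "rare_earth_watch", "random_account"},
    "seed_c": {"minerals_news", "seed_a"},
}
print(suggest_new_sources(following))
```

The threshold of two is an arbitrary choice for the example; the larger the initial lists, the higher it can reasonably be set.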

The picture got even more interesting when we merged the results from each of the students. Once we cleaned up the resulting picture (eliminated pendant nodes, color-coded the remaining Twitter users by team, etc.), the students had identified over 50 new sources of information that we had never heard of -- Twitter users who were posting information relevant to the issue of strategic minerals and who had been vetted by many of the Twitter users we had already identified. You can see this more focused set of Twitter users in the image below.
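For readers who want to see the merge-and-clean step in miniature, here is a rough sketch. It assumes each student's data is a set of (follower, followed) pairs and treats a "pendant" as any node connected to exactly one other node; the function names and sample accounts are hypothetical, and ORA's own cleanup may well differ in detail.

```python
from collections import defaultdict


def merge_edge_lists(edge_lists):
    """Combine several sets of (follower, followed) pairs into one set."""
    merged = set()
    for edges in edge_lists:
        merged.update(edges)
    return merged


def drop_pendant_nodes(edges):
    """Remove nodes that touch only one other node (a single pass).

    Direction is ignored when counting neighbors. Removing pendants can
    create new ones; this sketch does not repeat the pass.
    """
    neighbors = defaultdict(set)
    for follower, followed in edges:
        if follower != followed:
            neighbors[follower].add(followed)
            neighbors[followed].add(follower)
    keep = {node for node, adjacent in neighbors.items() if len(adjacent) > 1}
    return {(a, b) for a, b in edges if a in keep and b in keep}


# Hypothetical edge lists from two students.
student_1 = {("s1", "a"), ("s1", "b"), ("a", "hub")}
student_2 = {("s2", "a"), ("s2", "hub"), ("s2", "lonely")}

merged = merge_edge_lists([student_1, student_2])
pruned = drop_pendant_nodes(merged)
print(sorted(pruned))
```

In this toy example the accounts "b" and "lonely" each connect to only one other account, so they drop out of the merged picture.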

While this sounds exciting (and it was, it was...), trying to listen to over 50 new voices seemed to be a bit overwhelming. The question then became, "Of these 50, which are the 'best'?"

The traditional answer involves following all of them and then, over time, sorting out the wheat from the chaff. Most people don't have that kind of time; we certainly didn't. We needed another way to sort them and, thankfully, Twitter itself provides some potentially useful answers.

The first answer, of course, is to look at the number of "followers". This is the number of Twitter accounts that claim to follow a particular person or organization. In general, then, the sheer number of people who are following a particular person is a rough measurement of their influence and, by consequence, importance to a conversation on a particular topic.

Most twitterati don't put much credence in gross tallies of followers, though. Anyone with a Twitter account knows that only a relatively small number of their followers are actively engaged with the medium. Some studies have also indicated that a third or more of these followers are fake or, even worse, bought and paid for. While this is typically true of some of the most widely followed accounts and is significantly less likely to be true among the people who are tweeting about rare earths, for example, it is still a cause for concern.

Twitter again offers a solution to this problem but it takes a little work to get it. The key is Twitter's List feature. Twitter allows users to create lists of people; subsets, if you will, of the larger group of people a particular user might follow. For example, I have a list of competitive intelligence librarians (there are actually quite a few on Twitter). Lists are a way for people to follow hundreds or thousands of people but narrow and focus that chorus in a way that is most useful for them. It allows the savvy Twitter user to filter signal from noise.

Twitter allows a user not only to look at their own lists but also to see how many lists other people have created that include them. This is important because it takes time and effort to create and curate a list. It is almost certain that you have not been placed casually on a list. Being placed on a list is an indicator of credibility; being on lots of lists even more so. Like followers, though, the number of lists is still pretty rough and does not give the best sense of the value of a particular Twitter user to his or her followers. Thus, while the number of lists you are on is not a bad indicator, many people like to use the list-to-follower ratio to assess overall credibility.

In other words, if you had 1000 followers and every one of them had placed you on a list, you would have a list-to-follower ratio of 1. If only 500 had placed you on a list, then your list-to-follower ratio would be 0.5. In practice, list-to-follower ratios as high as 0.1 are rare. Based on my experience, a list-to-follower ratio of 0.05 is very good and a list-to-follower ratio of 0.03 or lower is more typical.
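The arithmetic is simple enough to do by hand, but a small sketch shows how the ratio can be used to rank a set of accounts. The account names and counts below are invented for illustration, and treating an account with zero followers as having a ratio of 0 is my own assumption.

```python
def list_to_follower_ratio(listed_count, follower_count):
    """Number of lists an account appears on, divided by its followers."""
    if follower_count <= 0:
        return 0.0  # assumption: no followers means no meaningful ratio
    return listed_count / follower_count


# Hypothetical accounts with follower and list counts.
accounts = [
    {"name": "account_a", "followers": 1000, "lists": 50},
    {"name": "account_b", "followers": 20000, "lists": 400},
    {"name": "account_c", "followers": 300, "lists": 30},
]

ranked = sorted(
    accounts,
    key=lambda a: list_to_follower_ratio(a["lists"], a["followers"]),
    reverse=True,
)
for account in ranked:
    ratio = list_to_follower_ratio(account["lists"], account["followers"])
    print(f'{account["name"]}: {ratio:.3f}')
```

Notice that the account with the most followers ends up last here, which is exactly why the ratio can be more informative than the raw tallies.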

While I am certain that there are automatic ways to collect the data you need from Twitter, we simply crowdsourced the problem. Dividing the list into 11 pieces, we were able to quickly and accurately collect and deconflict the various data we needed, including number of lists and number of followers. In the end, we were able to rank order the 50 top Twitter users talking about strategic minerals in a variety of useful ways. In all, including the teaching, it took us only about 6 hours to get from start to Top 50 list (for the complete list and more details go here).

And here is where the analogy breaks down...

Up to this point, we were able to fairly confidently connect traditional HUMINT ideas and activities with what we were doing, much more quickly, using Twitter data. The analogy wasn't perfect but it seemed good enough until we put the students -- the "case officers" -- into the network. They stuck out like sore thumbs!

Case officers in traditional HUMINT networks need to be working from the shadows, pulling the strings on their networks in ways that can't be seen or easily detected. Trying to lurk on Twitter in this sense just doesn't work, however. My students, who are following many people but are not followed by many, became very obvious as soon as they were added to the network. The same technology that allowed us to rapidly and efficiently come up with a pretty good first cut at who to follow on Twitter with respect to strategic minerals, allows those same people to spot the spammers and the autofollow bots and the lurkers and even the "case officers" pretty easily.
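To make the point concrete, here is a rough sketch of how easily such accounts can be flagged once the network data is in hand. It simply counts, within the collected network, how many accounts each node follows and how many follow it back. The thresholds, function name, and sample edges are all assumptions made up for this example.

```python
from collections import Counter


def find_lurkers(edges, min_following=3, max_followers=0):
    """Flag accounts that follow many in the network but are followed by few.

    `edges` is an iterable of (follower, followed) pairs. The default
    thresholds are arbitrary illustrative values.
    """
    following = Counter()
    followers = Counter()
    for follower, followed in set(edges):
        following[follower] += 1
        followers[followed] += 1
    nodes = set(following) | set(followers)
    return sorted(
        node
        for node in nodes
        if following[node] >= min_following and followers[node] <= max_followers
    )


# Hypothetical network: one student following three mutually connected sources.
edges = [
    ("student", "a"), ("student", "b"), ("student", "c"),
    ("a", "b"), ("b", "a"), ("c", "a"), ("b", "c"),
]
print(find_lurkers(edges))
```

The same few lines would flag an autofollow bot just as readily as a quiet student, which is rather the point.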

Back in my Army days we used to say, "If you can be seen you can be hit. If you can be hit, you can be killed." Social media appears to turn that dictum on its head: If you can't interact, you can be spotted. If you can be spotted, you can be blocked.

It turns out, it seems, that the only way to be hidden on Twitter is to be part of the conversation.
